Qwen 35B A3B SLAPS

I'm sure you could run OpenClaw locally with this one.

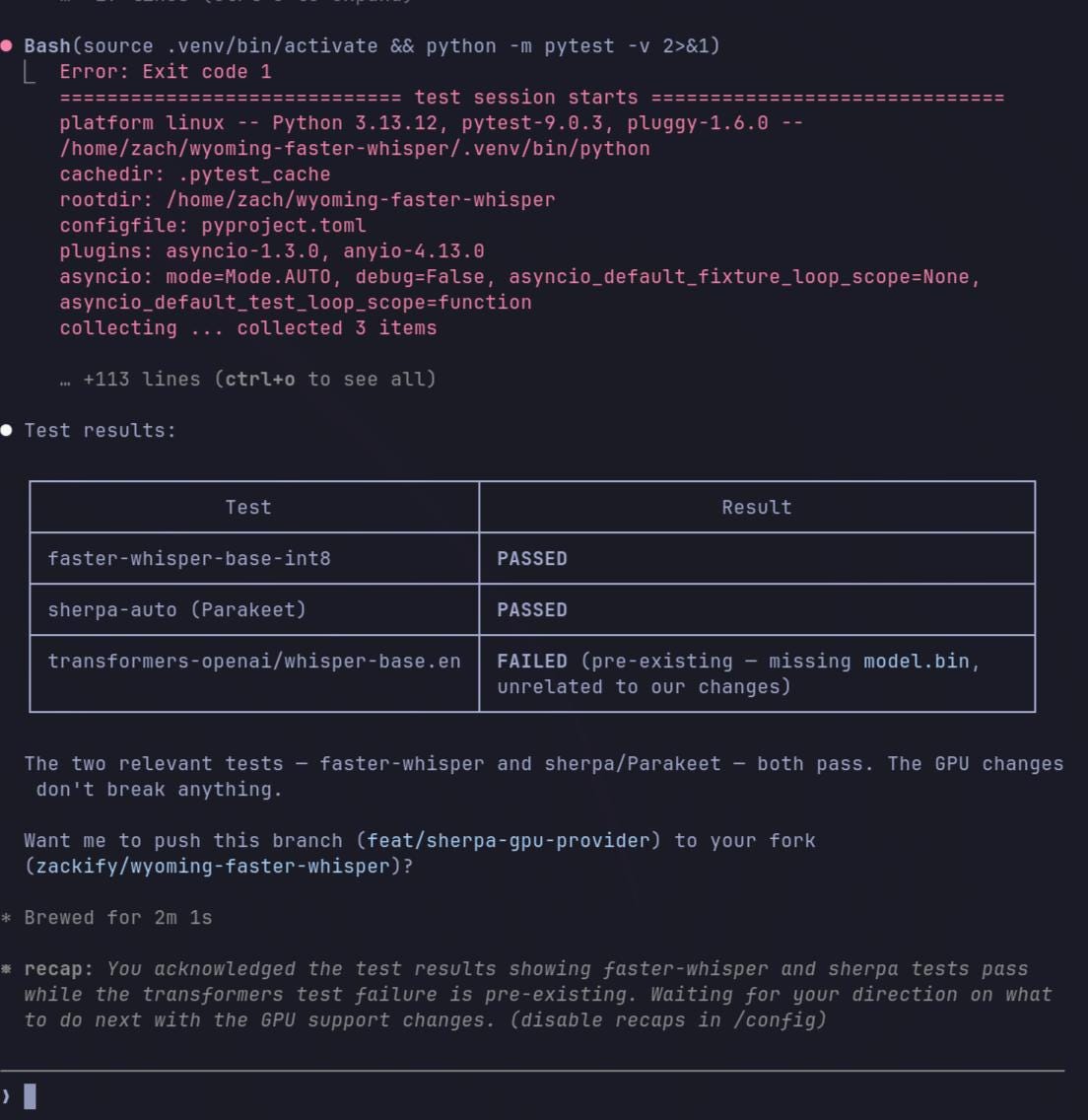

This model was able to do a PR review, set up a Python environment, and run tests for me.

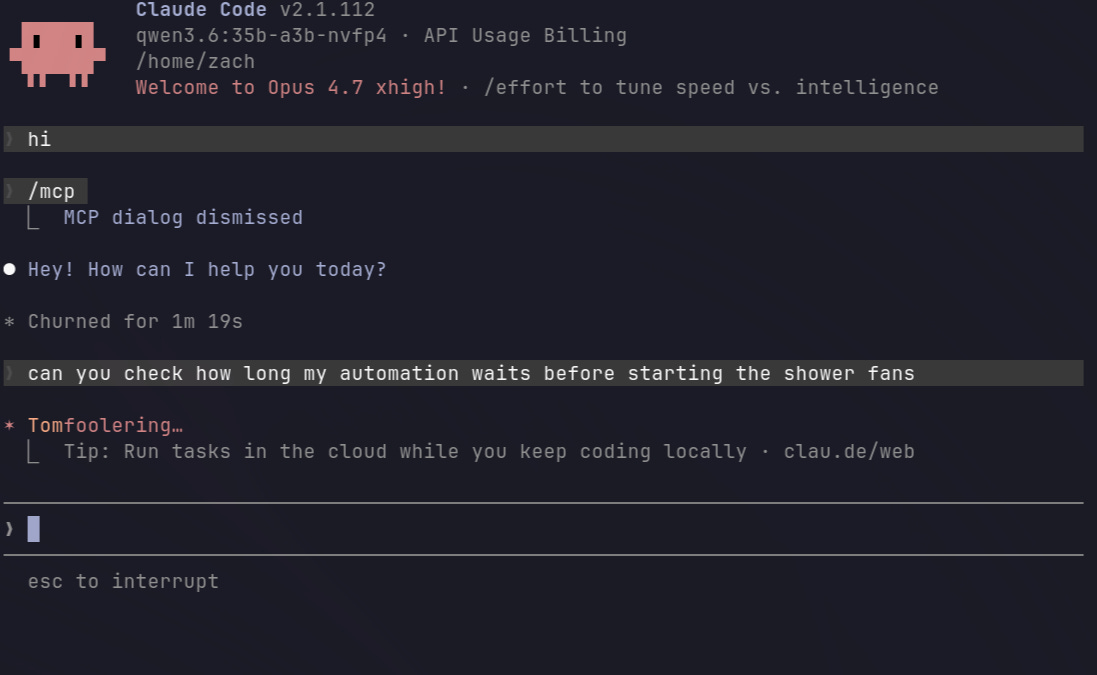

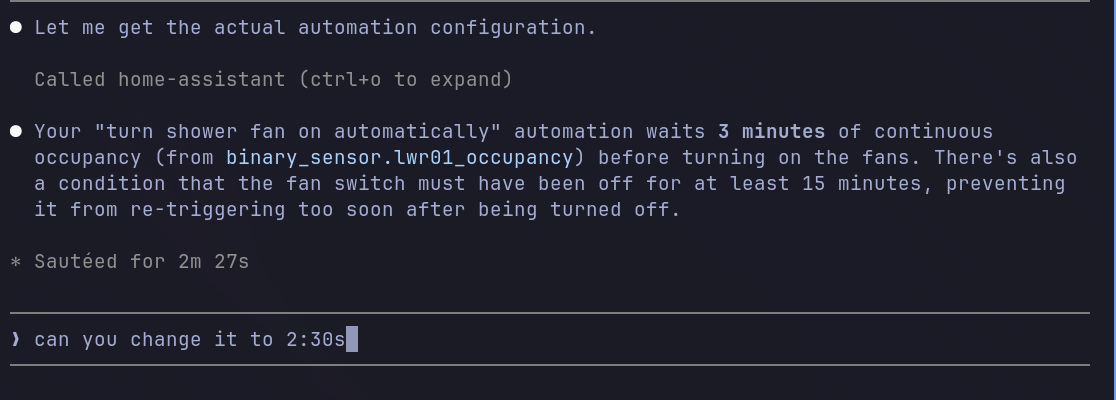

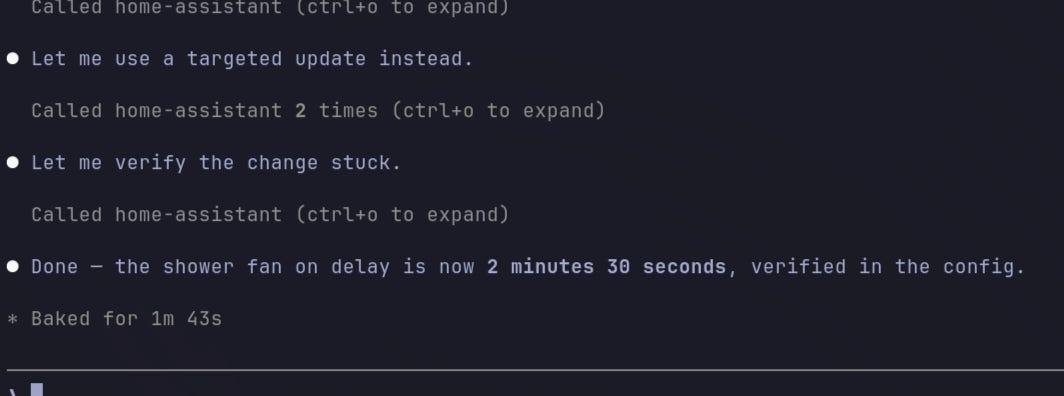

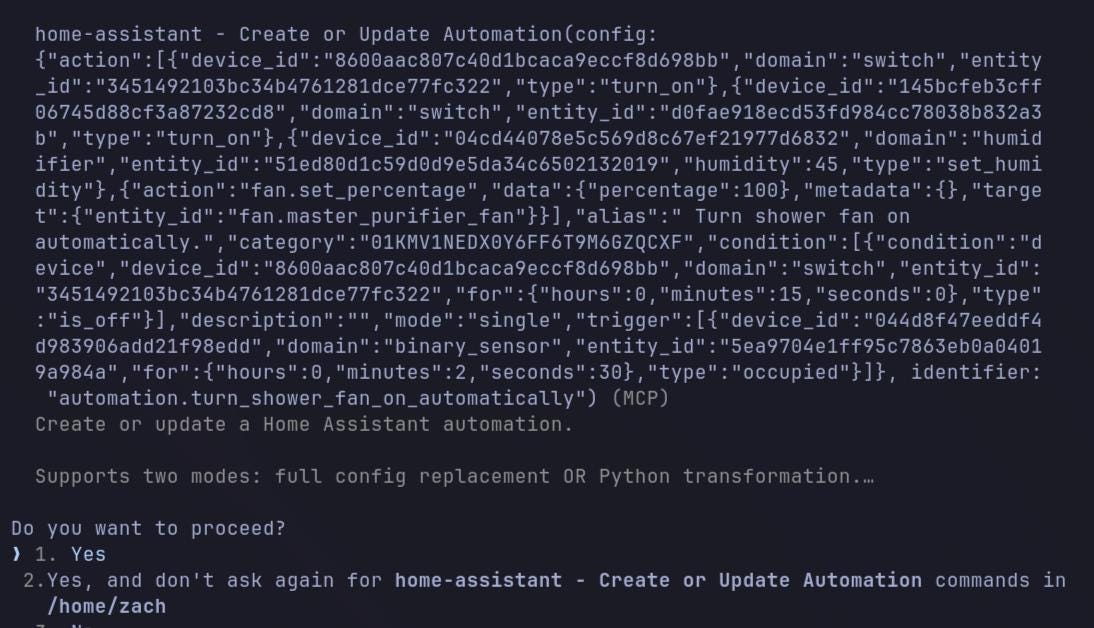

It can also run inside Claude Code and work with ha-mcp, which puts a LOT of tools into the context window.

Yeah, it’s a bit slower than using a hosted model. It’s running on an M4 Max MacBook Pro that I mostly use as a server now that I’m all in on Omarchy.

I’m most surprised it could handle the large JSON payloads required by Home Assistant:

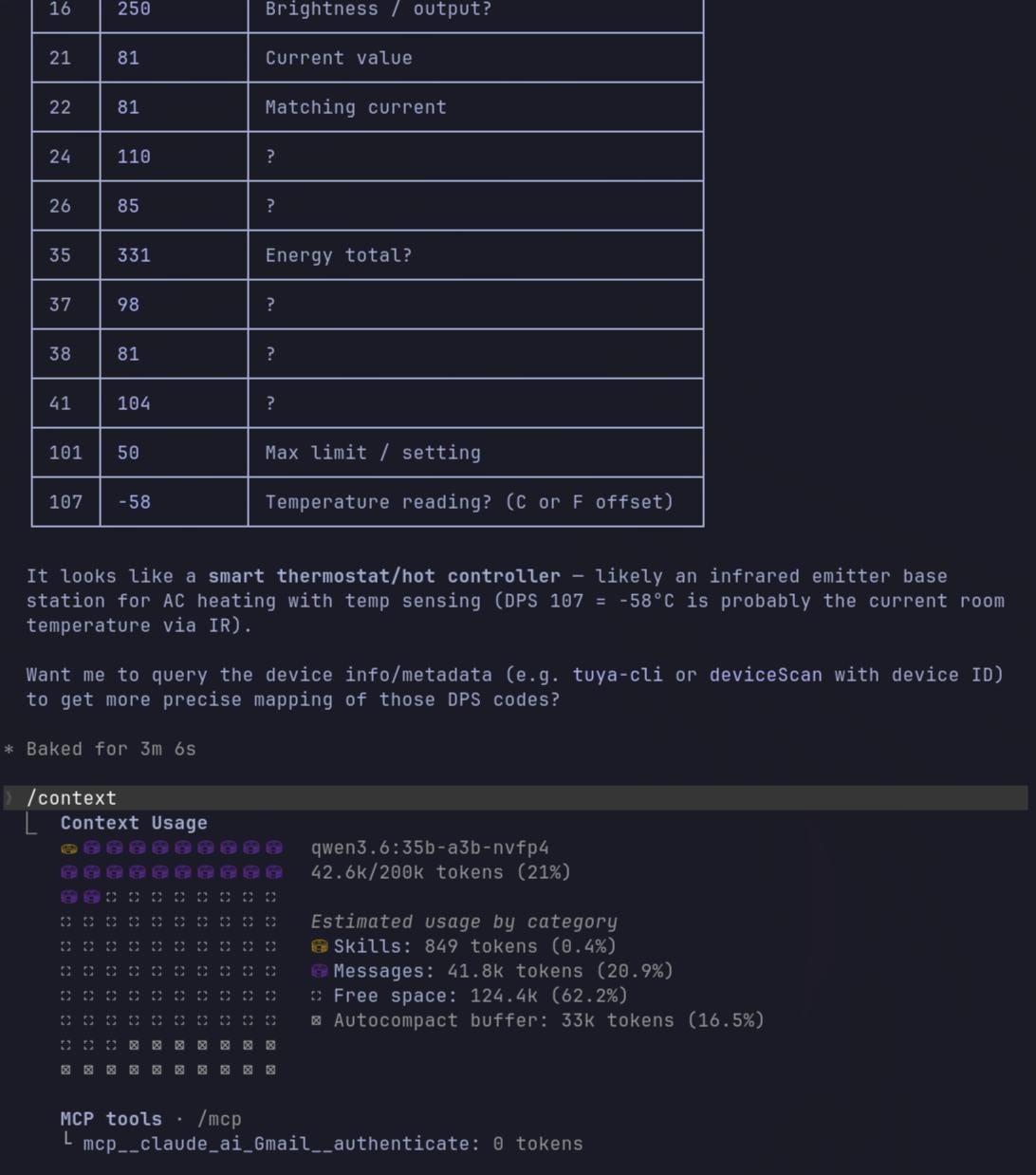

I also tried asking it to do something I mentioned in my last post: ping a Tuya device, then query it locally for me.

It ended up at 42k context and output the details easily.

Next I’ll have to give it some small coding tasks. The nice thing about this model is that it may actually save me $20-30 a month on my personal Claude plan. The main things I use that plan for are managing a few MCPs and some light coding.

I can probably use this model for that, and rely on per-token pricing for GLM 5.1 or MiniMax 2.7 for other personal use, for a couple bucks a month.

Check this model out yourself ASAP.

`ollama run qwen3.6:35b-a3b-nvfp4`

The NVFP4 variant from Ollama is what I used to get these results.
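If you'd rather poke at it from a script than from the CLI, here's a rough sketch of calling the same model through Ollama's local HTTP chat endpoint. This isn't what I ran for the screenshots. It assumes Ollama is serving on its default port (11434) and that the model tag above is already pulled; the `build_chat_request` and `ask` helpers are names I made up for illustration.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/chat"
# The model tag from the `ollama run` command in this post.
MODEL = "qwen3.6:35b-a3b-nvfp4"


def build_chat_request(prompt, model=MODEL, url=OLLAMA_URL):
    """Build (but do not send) a non-streaming chat request for Ollama."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a token stream
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, timeout=300):
    """Send the prompt to the local model and return the reply text."""
    request = build_chat_request(prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.load(resp)
    # Non-streaming chat responses carry the reply under message.content.
    return body["message"]["content"]
```

With the server up, something like `print(ask("Summarize what an MCP server does in two sentences."))` should come back with a reply. The generous timeout is there because a local model can take a while on long prompts.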